NLI 2020: First Workshop on Natural Language Interfaces

at ACL 2020, July 10, 2020.

Quick links (requires conference registration): [ACL workshop page] [RocketChat]

Overview

Natural language interfaces (NLIs) have long been the "holy grail" of human-computer interaction and information search. However, early attempts at building NLIs to databases did not achieve the expected success due to limitations in language understanding capability, extensibility, and explainability, among others. The last 5 years have seen a major resurgence of NLIs in the form of virtual assistants, dialogue systems, and semantic parsing and question answering systems. The horizon of NLIs has also expanded significantly beyond databases to, e.g., knowledge bases, robots, the Internet of Things, Web service APIs, and more.

This resurgence has been driven by two profound revolutions. (1) In the big data era, and as digitalization continues to grow, there is a rapidly growing demand for interfaces that connect users to the ever-expanding data sources, services, and devices in the computing world. NLIs are a very promising technology for meeting this demand, because they give users a unified way to interact with the entire computing world using language, their natural means of communication. (2) The renaissance of deep learning has moved us from rule and feature engineering to a world of neural architecture and data engineering, promising better language understanding, adaptability, and scalability. As a result, many commercial systems like Amazon Alexa, Apple Siri, and Microsoft Cortana, as well as academic studies on NLIs to a wide range of backends, have emerged in recent years.

Many research communities have been advancing NLI technologies in recent years: NLP and machine learning, data management and databases, programming languages, and human-machine interaction, among others. This workshop aims to bring together researchers and practitioners from these communities to review recent advances, revisit the challenges that led to the failure of earlier NLI systems, and discuss the remaining challenges and what to expect in the short- and long-term future.

Dates

All deadlines are 11:59 PM Pacific time.

- Workshop paper due date: April 20, 2020 (originally April 6, 2020; deferred due to the pandemic)
- Notification of acceptance: May 11, 2020 (originally May 4, 2020)
- Camera-ready papers due: May 22, 2020 (originally May 18, 2020)
- Workshop date: July 10, 2020

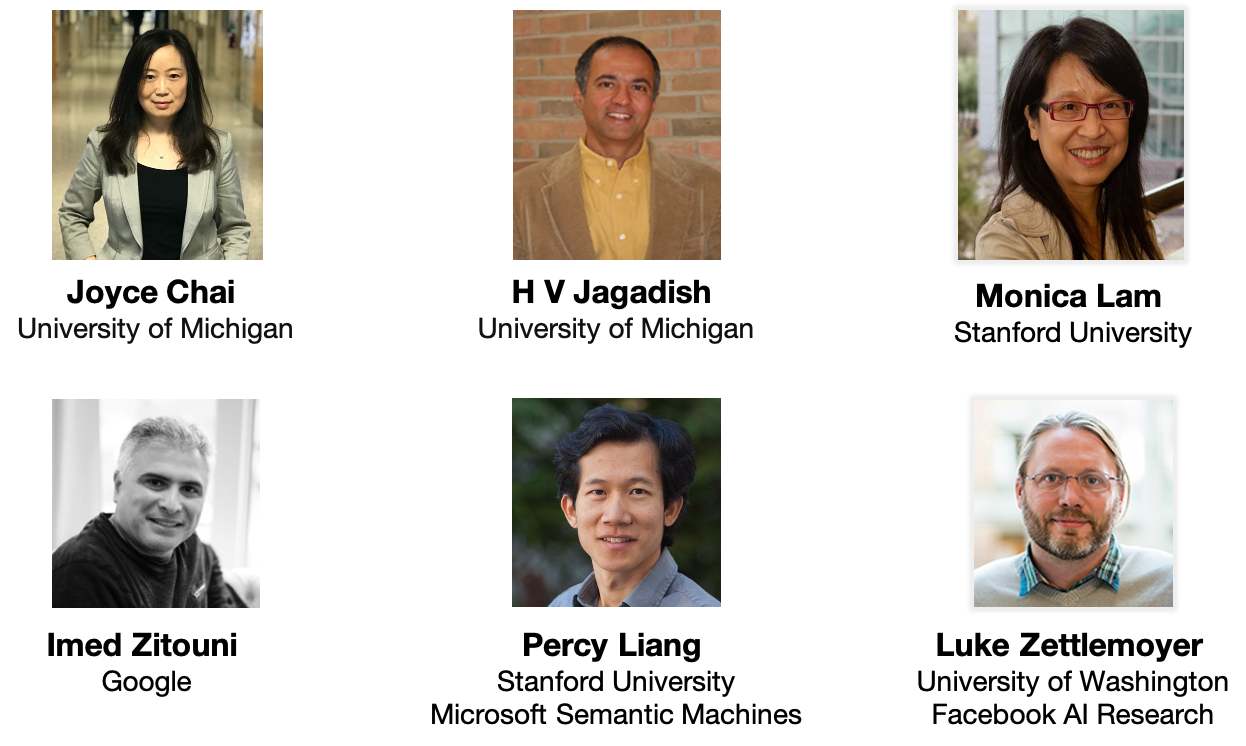

Invited Speakers

Details about the speakers and talks can be found here.

Topics

This workshop aims to bring together researchers and practitioners from different communities related to NLIs. As such, it welcomes a wide range of topics around NLIs, including but not limited to:

- Linguistic analysis and modeling. What are the linguistic characteristics of human-machine interaction via NLIs? How to develop better models to accommodate and leverage such characteristics?

- Interactivity, continuous learning, and personalization. How to enable NLIs to interact with users to resolve the knowledge gaps between them for better accuracy and transparency? Can NLIs learn from interactions to reduce human intervention over time? How can NLIs (learn to) be customized and adapt to user preferences? Interaction design, faithful generation, learning from user feedback, online learning.

- Data collection and crowdsourcing. Modern machine learning models are data-hungry, while data collection for NLIs is particularly expensive because of the domain expertise needed for formal meaning representation and grounding. How can data for NLIs be collected at scale and at low cost?

- Scalability, adaptability, and portability. How to construct NLIs that can reliably and efficiently operate at a large scale (e.g., on billion-scale knowledge graphs)? How to construct NLIs that can simultaneously support multiple inter-connected domains of possibly different nature? How to transfer knowledge learned from existing domains to help learning in new domains?

- Explainability and trustworthiness. How to make the reasoning process and the results explainable and trustworthy to users? How to help users understand how an answer is obtained or a command is executed?

- Privacy. How to ensure NLIs are compliant with privacy constraints? How to train, monitor, and debug NLIs within the compliance boundary?

- Evaluation and user study. How to systematically evaluate different usability aspects of an NLI as perceived by users? What are the protocols for conducting a reproducible user study? Is there a significant gap between in vitro and in vivo evaluation, and if so, how can it be bridged?

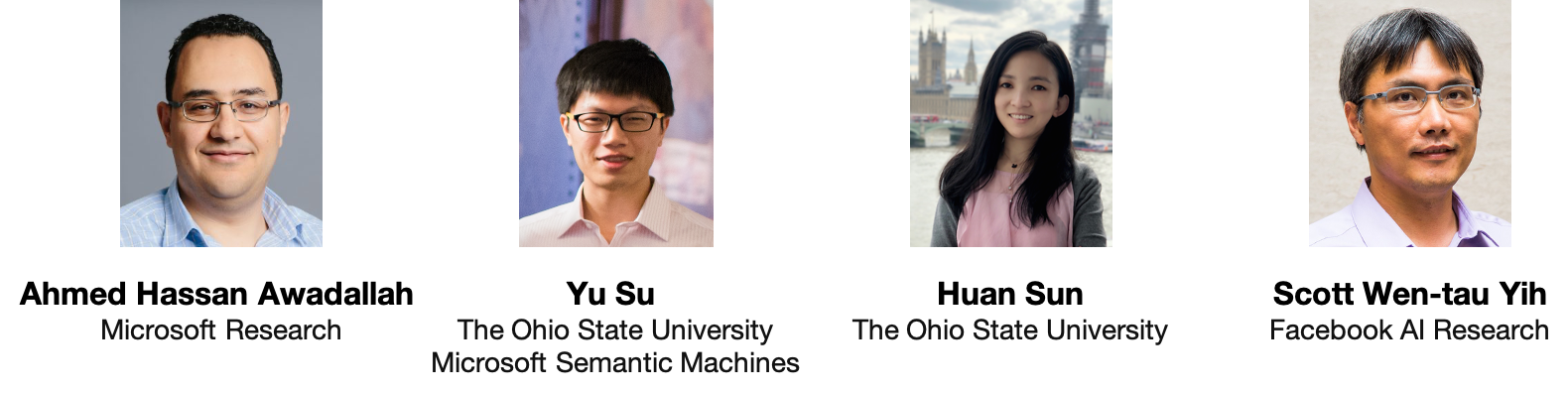

Organizers

The workshop is organized by Ahmed Hassan Awadallah (Microsoft Research), Yu Su (OSU/Microsoft Semantic Machines), Huan Sun (OSU), and Scott Wen-tau Yih (Facebook AI Research).

For any questions, please email nliacl2020@gmail.com.

Location

NLI 2020 will be held online, co-located with ACL 2020. The virtual workshop page is here (conference registration required).